Definition

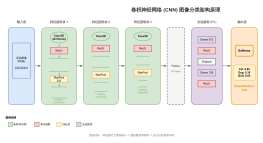

Use Convolution Operation in place of General Matrix Multiplication

Neural Network

Deal with Visual Imagery Originally
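To make "convolution in place of general matrix multiplication" concrete, here is a minimal pure-Python sketch. It assumes a single-channel 2D input, stride 1, no padding, and the cross-correlation form that deep-learning libraries usually call convolution. The name `conv2d` and the sample values are illustrative only.

```python
def conv2d(image, kernel):
    """Valid cross-correlation of a 2D image with a 2D kernel (stride 1, no padding)."""
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for r in range(ih - kh + 1):
        row = []
        for c in range(iw - kw + 1):
            # Weighted sum over a local window; the same kernel weights
            # are reused at every position (parameter sharing).
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        out.append(row)
    return out


image = [[1, 2, 3],
         [4, 5, 6],
         [7, 8, 9]]
kernel = [[1, 0],
          [0, -1]]
feature_map = conv2d(image, kernel)  # 2 x 2 feature map
```

Unlike a fully connected layer, each output value depends only on a small window of the input, and the four kernel weights are the only parameters.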

Structure Timeline

Attention

Residual Attention Module

2018

Feature Map Exploitation

Multi-Path Connectivity

Parameter Optimisation

Feature visualisation

Depth Exploitation

Spatial Exploitation

Programming ImageNet

2010

CNN Stagnation

Early 2000s

Depth Revolution

Skip Connection

2015

ResNet

ResNet18

ResNet34

ResNet50

ResNet101

ResNet152
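The skip connection behind the ResNet family can be sketched as adding a block's input back to its output, y = F(x) + x. The sketch below assumes flat lists of floats and an arbitrary callable `transform` standing in for the stacked layers F. Both names are hypothetical, not taken from any library.

```python
def residual_block(x, transform):
    """Return transform(x) + x, the identity skip connection."""
    fx = transform(x)
    if len(fx) != len(x):
        # The identity shortcut needs matching shapes; real networks
        # use a projection when the shapes differ.
        raise ValueError("transform must preserve the input length")
    return [f + xi for f, xi in zip(fx, x)]


# If the transform outputs zeros, the block reduces to the identity mapping.
out = residual_block([1.0, 2.0, 3.0], lambda v: [0.0 for _ in v])
```

Because the block only has to produce a correction on top of the identity, a layer that contributes nothing can drive its output toward zero without harming the signal.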

VGG

2014

Effective Receptive Field (Small Size Filters)

VGG-19

VGG-16
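The "small size filters" idea can be checked with simple arithmetic: stacked 3 x 3 convolutions cover the same receptive field as one larger filter while using fewer weights. This sketch assumes stride 1, no dilation, equal input and output channel counts, and ignores biases. The function names are illustrative.

```python
def stacked_receptive_field(kernel_size, num_layers):
    """Receptive field of num_layers stacked stride-1 convolutions."""
    # Each additional layer widens the field by (kernel_size - 1).
    return 1 + num_layers * (kernel_size - 1)


def conv_weight_count(kernel_size, channels, num_layers=1):
    """Weights in num_layers conv layers with `channels` inputs and outputs."""
    return num_layers * kernel_size * kernel_size * channels * channels


rf_two_3x3 = stacked_receptive_field(3, 2)    # same field as one 5 x 5
rf_three_3x3 = stacked_receptive_field(3, 3)  # same field as one 7 x 7
weights_three_3x3 = conv_weight_count(3, 64, num_layers=3)
weights_one_7x7 = conv_weight_count(7, 64)
```

Under these assumptions, three 3 x 3 layers need 27C² weights against 49C² for a single 7 x 7 layer, and they add two extra non-linearities along the way.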

GoogLeNet

2014

Factorization

Inception-ResNet-v2

Inception-ResNet-v1

Inception V4

Inception V3

BottleNeck

Inception V2

Inception V1

Inception Block

Parallelism

Spatial Exploitation

AlexNet

2012

SqueezeNet

ShuffleNet

Buzzwords

Pooling

max Pooling

average Pooling

stochastic pooling

Pooling Size

$2 \times 2$ dimension
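Max and average pooling with a 2 x 2 window can be sketched as follows. The sketch assumes non-overlapping windows (stride equal to the pooling size) and a single-channel feature map stored as nested lists. `pool2d` is an illustrative name.

```python
def pool2d(fmap, size=2, mode="max"):
    """Non-overlapping pooling over size x size windows."""
    out = []
    for r in range(0, len(fmap) - size + 1, size):
        row = []
        for c in range(0, len(fmap[0]) - size + 1, size):
            window = [fmap[r + i][c + j]
                      for i in range(size) for j in range(size)]
            if mode == "max":
                row.append(max(window))
            else:
                # Average pooling: mean of the window values.
                row.append(sum(window) / len(window))
        out.append(row)
    return out


fmap = [[1, 3, 2, 4],
        [5, 6, 1, 2],
        [7, 2, 9, 0],
        [1, 8, 3, 4]]
max_pooled = pool2d(fmap, size=2, mode="max")  # [[6, 4], [8, 9]]
avg_pooled = pool2d(fmap, size=2, mode="avg")  # [[3.75, 2.25], [4.5, 4.0]]
```

Stochastic pooling would instead sample one value per window with probability proportional to its activation. It is left out here to keep the sketch short.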

Mask Matrix

Feature Map

Convolutional layers

an input layer

hidden layers

an output layer

Filter

Above 2D, Normally 3D

Stride

Padding

Dilation
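Filter size, stride, padding and dilation together determine the spatial size of the output feature map. The helper below is a sketch of the usual formula for one spatial dimension, assuming symmetric zero padding and floor rounding. The name `conv_output_size` is illustrative.

```python
def conv_output_size(n, kernel, stride=1, padding=0, dilation=1):
    """Output length along one dimension of a convolution."""
    # A dilated kernel spans dilation * (kernel - 1) + 1 input positions.
    effective_kernel = dilation * (kernel - 1) + 1
    return (n + 2 * padding - effective_kernel) // stride + 1


same_size = conv_output_size(224, kernel=3, stride=1, padding=1)  # 224
halved = conv_output_size(224, kernel=7, stride=2, padding=3)     # 112
dilated = conv_output_size(32, kernel=3, dilation=2)              # 28
```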

Weights

Parameters

Parameter sharing

Local connectivity

Spatial arrangement

Early stopping

Added regularizer

weight decay

max norm constraints
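The two added regularizers can be sketched on a plain weight vector. The sketch assumes L2 weight decay folded into an SGD step and a max norm constraint applied by rescaling after the update. The function names and the hyperparameters `lr`, `decay` and `max_norm` are illustrative.

```python
import math


def sgd_step_with_weight_decay(w, grad, lr, decay):
    """One SGD step where the L2 penalty adds decay * w to the gradient."""
    return [wi - lr * (gi + decay * wi) for wi, gi in zip(w, grad)]


def apply_max_norm(w, max_norm):
    """Rescale w so that its L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    if norm <= max_norm:
        return list(w)
    scale = max_norm / norm
    return [wi * scale for wi in w]


w = sgd_step_with_weight_decay([1.0, -2.0], [0.0, 0.0], lr=0.1, decay=0.5)
w = apply_max_norm(w, max_norm=1.0)
```

With a zero gradient, weight decay alone shrinks every weight toward zero, while the max norm constraint only acts once the vector grows past the chosen bound.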

Receptive Field

Fine-tuning

Human interpretable explanations

Residual Connection

Factorization

Downsampling

Upsampling

Attention

Feature Invariance

Normalisation

Local Response Normalization

Data Augmentation

Optimizer

Exponentially weighted average

bias correction in exponentially weighted average

momentum

Nesterov Momentum

Adagrad

Adadelta

RMSprop

Adam
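Adam combines several items from this branch: exponentially weighted averages of the gradient (momentum) and of the squared gradient (as in RMSprop), each with bias correction. Below is a minimal sketch of one update step on a list of weights. The default hyperparameters are the commonly quoted ones, and `adam_step` is an illustrative name.

```python
import math


def adam_step(w, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update; t is the 1-based step count."""
    # Exponentially weighted averages of the gradient and squared gradient.
    m = [beta1 * mi + (1 - beta1) * g for mi, g in zip(m, grad)]
    v = [beta2 * vi + (1 - beta2) * g * g for vi, g in zip(v, grad)]
    # Bias correction offsets the zero initialisation of m and v.
    m_hat = [mi / (1 - beta1 ** t) for mi in m]
    v_hat = [vi / (1 - beta2 ** t) for vi in v]
    w = [wi - lr * mh / (math.sqrt(vh) + eps)
         for wi, mh, vh in zip(w, m_hat, v_hat)]
    return w, m, v


w, m, v = [1.0], [0.0], [0.0]
w, m, v = adam_step(w, grad=[2.0], m=m, v=v, t=1)
```

On the first step the bias-corrected averages equal the gradient and its square, so the update has magnitude close to `lr` regardless of the gradient scale.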

Convergence

Transfer

Gradient Descent

Batch gradient descent

Mini-batch gradient descent

stochastic gradient descent
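The three gradient descent variants differ only in how many samples feed each update. The sketch below fits a single weight `w` in the toy model y = w * x under squared error. The data, learning rate and the name `gradient_descent` are assumptions made for illustration.

```python
import random


def gradient_descent(xs, ys, batch_size, lr=0.01, epochs=100, seed=0):
    """Fit y = w * x by minimising squared error with the given batch size."""
    rng = random.Random(seed)
    w = 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            # Mean gradient of (w * x - y)^2 over the current batch.
            grad = sum(2 * (w * xs[i] - ys[i]) * xs[i] for i in batch) / len(batch)
            w -= lr * grad
    return w


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
w_batch = gradient_descent(xs, ys, batch_size=len(xs))  # batch gradient descent
w_mini = gradient_descent(xs, ys, batch_size=2)         # mini-batch
w_sgd = gradient_descent(xs, ys, batch_size=1)          # stochastic
```

On this noise-free toy data all three settings approach w = 2. On real data, smaller batches give noisier but cheaper updates.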

Activation Function

SoftPlus

SoftMax

Tanh

Sigmoid
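The four activation functions listed above can be written directly from their definitions. This is a sketch using only the standard library. The softmax subtracts the maximum for numerical stability, while the sigmoid and softplus here are not hardened against very large magnitudes of `x`.

```python
import math


def sigmoid(x):
    """Logistic function, output in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def tanh(x):
    """Hyperbolic tangent, output in (-1, 1)."""
    return math.tanh(x)


def softplus(x):
    """Smooth approximation of ReLU: log(1 + e^x)."""
    return math.log1p(math.exp(x))


def softmax(xs):
    """Map a list of scores to probabilities that sum to 1."""
    shift = max(xs)  # subtracting the max avoids overflow in exp
    exps = [math.exp(x - shift) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([1.0, 2.0, 3.0])
```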

Bias_add

Dropout (Neuron)

dropout rate

range=[0,1)

empirically set to [0.3, 0.5]

before dropout

$z_i^{l+1} = w_i^{l+1} \times y^l + b_i^{l+1}$

$y_i^{l+1} = f(z_i^{l+1})$

after dropout

$r^l \sim \mathrm{Bernoulli}(p)$

$\widetilde{y}^l = r^l \times y^l$

$z_i^{l+1} = w_i^{l+1} \times \widetilde{y}^l + b_i^{l+1}$

$y_i^{l+1} = f(z_i^{l+1})$

rescale rate

rescale rate = 1 / (1 - dropout rate)
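The dropout equations and the rescale rate can be combined into a short sketch of "inverted" dropout, where the kept activations are scaled up during training so nothing changes at inference. It assumes that the Bernoulli parameter is the keep probability, 1 - dropout rate, which is consistent with the rescale rate above. `dropout` and its arguments are illustrative names.

```python
import random


def dropout(y, rate, rng, training=True):
    """Apply inverted dropout to a list of activations."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("dropout rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(y)
    keep = 1.0 - rate
    scale = 1.0 / keep  # rescale rate = 1 / (1 - dropout rate)
    out = []
    for value in y:
        r = 1 if rng.random() < keep else 0  # r ~ Bernoulli(keep)
        out.append(r * value * scale)
    return out


rng = random.Random(0)
activations = [1.0, 2.0, 3.0, 4.0]
dropped = dropout(activations, rate=0.5, rng=rng)
unchanged = dropout(activations, rate=0.5, rng=rng, training=False)
```

With a rate of 0.5 every surviving activation is doubled, so the expected value of each output matches the original activation.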

DropConnect

DepthConcat

Forward

Backpropagation

Applications

Image recognition

Video analysis

Natural language processing

Anomaly Detection

Drug discovery

Health risk assessment

Biomarkers of aging discovery

Checkers game

Go

Time series forecasting

Cultural Heritage and 3D-datasets